Risk Management, meet Design Thinking.

Our modern society - that is, your life - depends on incredibly complex socio-technical systems.

In the past, we got away with incrementally improving what came before.

But now, we have started radically redesigning our energy, transportation, healthcare, communication and food systems.

That is both very necessary, and very risky.

Welcome to the fun world of engineering programs!

Hello world, my name is Josef Oehmen. I am an associate professor at the Technical University of Denmark, and this is my website!

You may want to check my “official” work website at http://risklab.dtu.dk for the latest updates. I am a little lazy about updating this personal website.

Upcoming Risky Events

All the fun you have missed :-(

Smart systems are good, smart resilient systems are much better!

My Latest Risky Wisdom

See all my wisdom. You can find my academic publications at DTU and on Google Scholar.

Some of my risky projects

What can network science tell us about robustness and interactions in complex engineering projects?

Why I do what I do

I believe we need to get three things right with the systems we design: Productivity, because we love our standard of living. Sustainability, because we only have one planet. Resilience, because our lives depend on it.

As you may have noticed by now, I think we ought to do more about the resilience side of things.

There are three critical categories of uncertainty we struggle with: We don’t know how well future technologies will work. We don’t know how well our organizations will perform. And we struggle to even articulate dependable goals and requirements to work towards.

My work looks for the right balance of social and technical factors when mitigating critical risks.

Design Thinking, more specifically Engineering Systems Design, is the foundation of my work, because I love structured creativity.

The areas that my work impacts most are project management and systems engineering, because this is where humans come together to solve problems and do great things.

And I am a fan of Lean Management, because you can never focus too much on value.

A really short biography

(I probably sent you here to copy it for your event program)

(And yeah, as my wife pointed out to me, it’s not really that short)

Josef Oehmen, Ph.D., MBA, is an Associate Professor at the Technical University of Denmark (DTU). His research focuses on designing and managing large-scale (systems) engineering programs, especially on how to deal with risk, uncertainty and ignorance. He is the founder and coordinator of the Engineering Systems RiskLab at DTU (http://risklab.dtu.dk). Prior to DTU, Josef worked at MIT and ETH Zurich (where he also obtained his Ph.D.).

He has won numerous awards and scholarships and is regularly invited as a keynote speaker. His honors include teaching awards, the Shingo Prize for his work on Lean Engineering Program Management (2013), an appointment as Visiting Professor at the Technical University of Munich (2012), winning the DAU Research Competition (2012), an INCOSE collaboration award (2012), a journal paper of the year award (2011), and the research award of the Department for Management, Technology and Economics at ETH Zurich (2009).

He co-founded DTU's Engineering Systems Group as well as MIT’s Consortium on Engineering Program Excellence (CEPE), co-chairs the INCOSE Lean Systems Engineering Working Group, and founded and co-chairs the Design Society’s Special Interest Group on Risk Management Processes and Methods. He is a member of the board of IDA Risk.

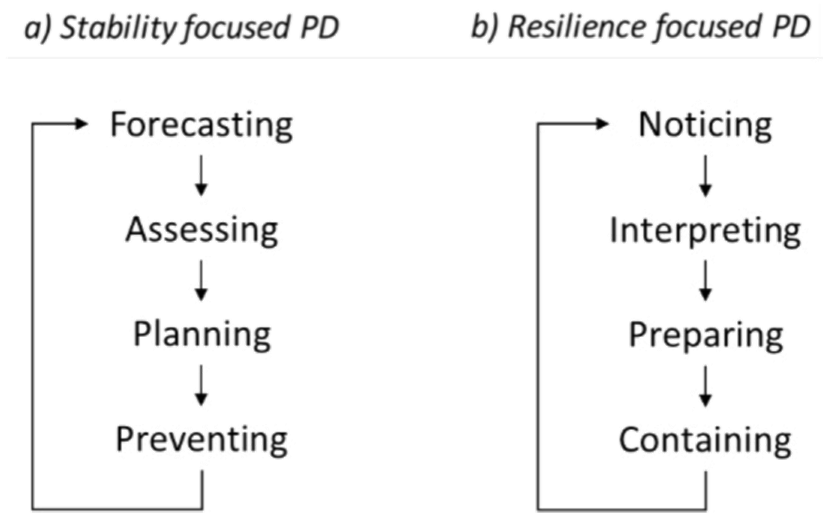

Josef's current research focuses on developing and implementing paradigm-shifting risk management techniques in the design, construction and operation of Engineering Systems. He brings a design and risk management perspective to improving how we collaborate in project management and systems engineering. He works on advanced (non-probabilistic and ML-based) risk quantification methods, principles of resilient engineering and project execution, lean risk management, and risk-informed leadership in engineering organizations.

Me, seriously managing some serious risk (artist's impression)